Zoom Link: https://acm-org.zoom.us/j/99511898870

Abstract:

Today, the volume of video captured for analysis by computer vision models grows faster than the volume of video watched by human viewers. Yet traditional video delivery and processing systems are designed for human perception, whereas computer vision models (deep neural networks, or DNNs) "perceive" video data differently: they value high inference accuracy and low inference delay. This discrepancy in performance objectives has far-reaching consequences. In this talk, I will introduce two new abstractions that enable video analytics systems to self-adapt to the dynamic characteristics of input video streams and to the idiosyncrasies of different DNN models. The key insight is that, while eliciting real-time feedback from human users is hard, DNN models naturally provide rich and instantaneous feedback that can be harnessed to guide efficient system adaptation.

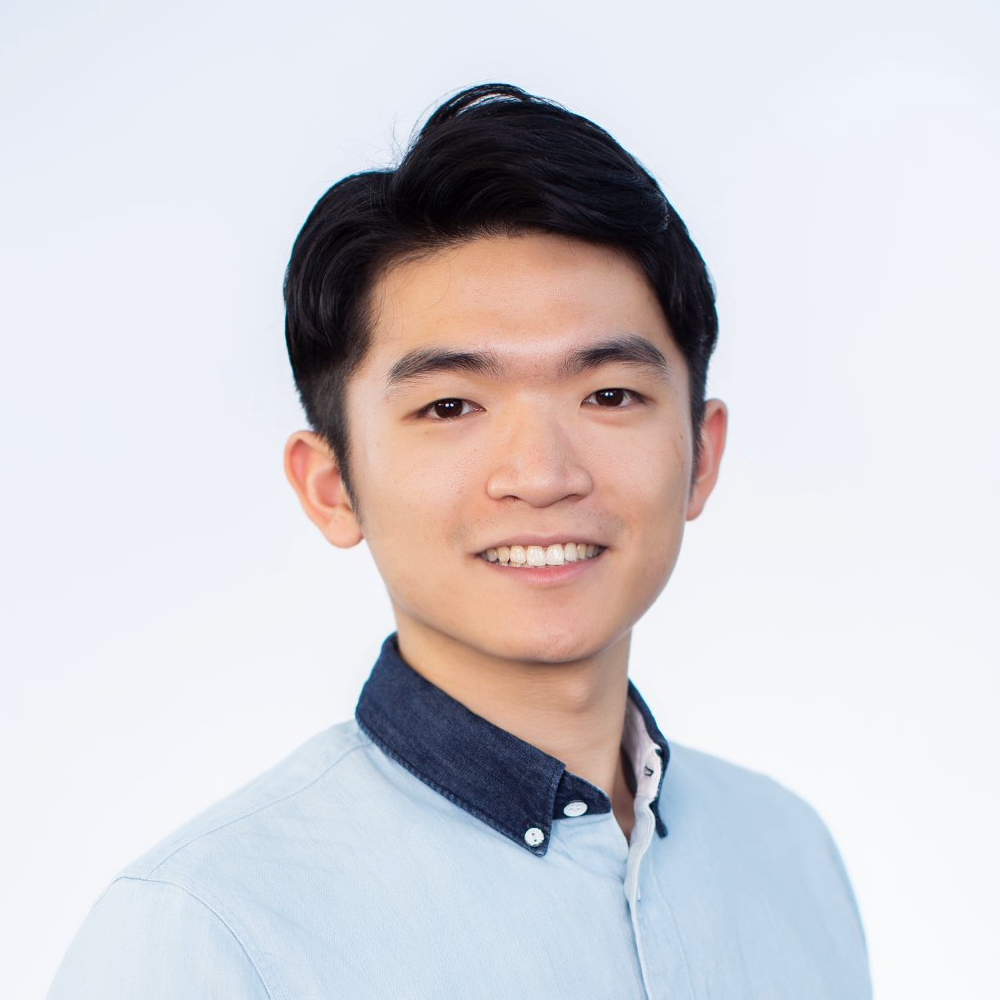

Junchen Jiang is an Assistant Professor of Computer Science at the University of Chicago. He received his PhD from CMU in 2017 and his bachelor's degree from Tsinghua University in 2011. His research interests are networked systems and their intersections with machine learning. He is a recipient of a Google Faculty Research Award, an NSF CAREER Award, and the CMU Computer Science Doctoral Dissertation Award.

The video recording of the talk is available here.